The large-scale US military operation against Iran, launched in late February 2026, has attracted considerable attention. The operation reportedly relied on an AI system that analyzes vast amounts of data in real time to identify and prioritize targets. At the heart of this system is Palantir Technologies' software, particularly the "Maven Smart System." While some conspiracy theories claim President Trump was "deceived" into the war, the reality is more complex, and it highlights the dangerous link between technology and power.

Palantir’s Role: AI-Accelerated “Kill Chain”

Palantir is a data analytics company co-founded by Peter Thiel (a PayPal co-founder and Trump supporter) and backed early on by In-Q-Tel, the CIA's venture capital arm. In the Iran operation, the company's Maven Smart System integrated battlefield data, and its AI components, which reportedly incorporate Anthropic's Claude model in part, proposed targets. This reportedly enabled the US military to carry out thousands of precision strikes in a short period. Palantir's CTO has described this as "the first large-scale conflict led by AI," boasting of a dramatic improvement in decision-making speed.

In fact, reports have circulated since the start of operations that Maven played a "crucial role" in target selection. Although humans make the final decisions, AI recommendations very likely accelerated the "kill chain" (the process from detecting a target to destroying it), shrinking a task that once took several days to just a few hours. While some are investigating whether AI played a part in civilian casualties, such as strikes on schools, Palantir insists that responsibility lies with the military.

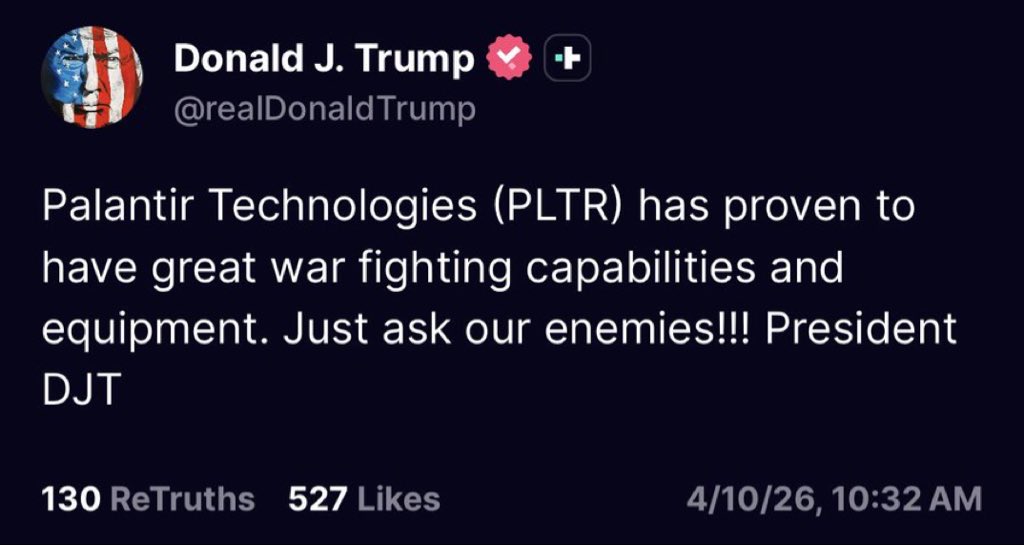

Under the Trump administration, Palantir's contracts have expanded, and there are moves to formally adopt Maven across the entire US military. Thiel's influence and Trump's emphasis on AI are clearly behind this. However, the claim that "the president was deceived by AI" has little support. Trump has publicly praised Palantir's combat capabilities, which suggests the outcome reflects a deliberate choice to use the technology rather than manipulation by it. AI is a "tool"; it does not "cause" war on its own. Decisions are still made by humans (the president and military leaders).

Is Palantir’s software a “tool for starting wars”?

Now, here's the main point. Palantir's technology has been used not only in Iran but also in the earlier wars in Iraq and Afghanistan, in Israeli military operations in Gaza and Lebanon, and in the conflict in Ukraine. While it is said to have "proven effective" at tracking terrorists and countering IEDs (improvised explosive devices) through data integration and predictive analytics, concerns about civilian surveillance and mass targeting persist.

Advantages: It instantly links vast amounts of information (satellite imagery, communications data, social media, etc.) to predict threats. Proponents claim it reduces human bias and enables precision strikes. Palantir CEO Alex Karp has publicly stated that it "gives the West an advantage" and has even called its use in war "a source of pride."

Dangers: There is a risk that AI "recommendations" will override human judgment and amplify attacks based on misinformation. Palantir's systems were also used in the IAEA's (International Atomic Energy Agency) monitoring of Iran's nuclear program, leading to accusations that this provided a "pretext" for attack. Critics have also pointed to a conflict of interest: the company earns enormous profits from military contracts while potentially fueling conflict. In Europe, some have rejected Palantir over human rights concerns.

The view that Palantir's software could be a "tool for instigating wars around the world" is a realistic concern, not merely a conspiracy theory. While the technology itself is neutral, the company's deep ties to military and intelligence agencies make it easier for conflicts to escalate. If AI accelerates the "kill chain," it could shorten the window for diplomatic solutions and lead to accidental escalation. The Trump administration's favoritism toward Palantir, while shutting out Anthropic (the developer of Claude) as "left-wing," also highlights the danger of military applications becoming overly dependent on a single technology ecosystem.

Furthermore, once Peter Thiel's influence is taken into account (including his connections to Trump's circle and to Japanese politics), a picture emerges in which corporations indirectly shape national decision-making. Palantir presents itself as a "tool for winning wars," but it is worth examining whether it is becoming a "tool for multiplying wars."

Conclusion: The duality of technology and human responsibility

It's not as simple as President Trump being "deceived by Palantir's AI." Rather, the escalation of the Iran war resulted from actively integrating AI into the military and enabling rapid operations. Still, software from companies like Palantir is undeniably changing the patterns of global conflict. A world in which data "predicts" everything and attacks are "streamlined" is fraught with ethical and strategic pitfalls.

We should steer AI toward supporting peaceful decision-making rather than using it as a "tool" of war. It is crucial to watch whether Palantir's technology truly contributes to "global stability" or instead sparks a new arms race. Technological progress is inevitable, but we must keep demanding transparency and responsible use so that humans do not lose control.

(This blog is an analysis based on publicly available information. Many details of military technology are classified, and this does not cover all aspects. We welcome your comments.)